Attention, Intention, Affection, Devotion (AIAD)

The fourfold desecration of humanity.

With each step of technological ascent, the price goes up.

How much are we willing to pay?

Efficient and advanced machines grant us much power and might. AI agents and bots can now do the work for us. The code, the report, the spreadsheet, the music, the art, the thinking.

But what is the tradeoff?

Everything has a tradeoff.

First, zoom out and look at what anchors us:

People, Place and Purpose are what ground us in the present and propel us to the future.

People: The ones you love, people you care about, people who care for you, friends, community.

Place: An embodied presence at a physical (not virtual) location.

Purpose: A sense of where you are going and why you are going there.

But the enemy seeks to debase and dehumanise our experiences.

The enemy is the Lie.

The lies consist of replacing People, Place and Purpose with:

Abstraction: We can relate to and benefit from re-presentations of humans via anthropomorphic digital experiences.

Disembodiment: We can transcend the geo-biological constraints and exist—and persist—in the mechanical virtual world without the trappings of our bodies.

Nihilism: We don’t need purpose and meaning in life, because there is no purpose and meaning. (If that’s too unbearable, settle for soft nihilism and create your own meaning and moral relativism.)

To protect our People, Place and Purpose, I make the case that there are four human elements that we need to conserve and preserve.

These four invisible elements are forms of our original goodness, found in the interiors of our hearts and minds.

Even though we can’t see them, we feel them.

These need to be cultivated.

We must seek to protect them. We have to defend our inner and outer lives. We have to teach others how to defend theirs too.

Here are the four territories:

Attention

Intention

Affection

Devotion

The four flow into one another, like streams into a river.

Let’s meet them.

1. Attention

It’s worse than you think.

We are possessed.

It’s only obvious when you stop and reflect.

Just look at our possessions and how often we hold on to them. How often we touch them. How many hours we spend using them, even in the loo.

The average person spends 5.5 hrs a day on their phone. That’s 17 years. Gone.

A Substack user posted this image:

It’s no coincidence tech companies call us “users” on the platform.

Who else is called “users”? Addicts.

“Everyone knows what attention is,” wrote William James in The Principles of Psychology (1890). James elaborates,

It is the taking possession of the mind… Focalisation, concentration, of consciousness are of its essence. It implies a withdrawal from some things in order to deal effectively with others. [emphasis mine]

Possession

Unfortunately, we have lost the value of a focused life.

We are possessed. Not by the things that bring us deep joy, but by the strategic design of tech giants making us see what they want us to see.

Neil Postman’s critique was aimed at television when he wrote about “amusing ourselves to death,” but our current situation extends far beyond that.

News feed. Reels. Memes. Life-hacks. Finance. Health. Messages. Emails. More emails.

We are consuming so much more than ever before in history that we are consumed by the machine factory.

We are not just users. We are used.

We feed these social media platforms with “living our best lives” content. Other times, we even feed them nutritious and useful content, and end up becoming employees of the algorithm gods.

The deluge of information is breaking our torchlights of attention.

Attention is the primary light source. It is our consciousness and how we experience the world.

Simone Weil said,

Attention is the purest and rarest form of generosity.

Attention is one of the true possessions that we must safeguard and protect.

To be possessed, ultimately, is to be taken by another entity; something precious is taken from you.

§

I have not felt this way before. Not to this degree.

Of late, I can feel the strain on my attentional muscle. I lose track of what I was trying to do much more easily. I get side-tracked and derailed.

This wasn’t an issue in the past. And I don’t think it’s got to do with age.

Even as I write this, I am wrestling to protect my attention. My laptop and extended screen are colluding and screaming for me to click on the multiple browser tabs. At least 10 applications are lurking in the background, ready to grab me. My iPhone sits dangerously close on my right.

On the second day of writing and editing, I am forced to sit at a park outside my house to work.

I can no longer trust myself to guard my attention. I have to turn things off or use a blocker (two in fact).

The irony for the modern person is that things have become too easy: there is no friction left to keep us on track.

Decision-making theorist Herbert Simon said,

“A wealth of information creates a poverty of attention.”

We are truly impoverished.

Withdrawal

When James said, “…a withdrawal from some things in order to deal effectively with others,” he was referring to the necessary trade-off when we are focused on a particular thing.

The trade-off to a distracted life is that we are effectively poor participants in our environment and relational life.

We walk the streets while our eyes dart between the surroundings and our Instagram feed, checking the weather app in between. Even when we watch television, we switch between the flatscreen and our palm-screen to fact-check a documentary, or look at other interesting videos while the uninteresting bits of a movie hum along.

§

Where your attention is, that’s where life is.

If attention were a light, ours is diffused at best, and fragmented at worst.

We react immediately when a notification bings, as if a baby is in need.

We pick up the phone hundreds of times a day.

We touch our touchscreens more than we touch our loved ones.

If our life is the sum of what we focus on, I’m afraid that we waste tons of caloric energy.

In Rapt, Winifred Gallagher says, energy goes where your attention flows. Our energy is scattered across various non-analog things, all at once.

Taylor Swift says, our energy is expensive. Normally, we don’t turn to pop stars for sage advice. But she’s right. We squander our energy.

The trouble is our digital devices aren’t single-function devices. They are multi-functional. Say you try to write a note in your Notes app. The thing that you hold in your hand has the ability to spellcheck as you write, switch to a web browser if you want to look up a piece of information, and answer a message from your boss who is asking if you can attend the two-hour meeting on Friday at 4pm. Oh, don’t forget to add that to your calendar.

A multi-function device is a blessing. We can do so much.

It is a curse on attention.

Writing in a notebook is a different experience than thumb-writing on your smartphone. A notebook is a single-function device. You can write, outline, doodle, sketch, or mindmap; it grounds you.

Your attention is unmixed by other alluring functionalities, which actually makes notebooks paradoxically joyful to use.

I’ve kept a notebook with me for as long as I can remember. It’s deeply satisfying to put thoughts and ideas down with a pen and paper.

I use a digital notetaking app to store what I’ve read, watched or listened to (I’m a big fan of Obsidian). But it doesn’t exist on a single-function device. It’s on my computer.

I love notebooks so much that I’ve started learning the basics of bookbinding and making my own notebooks (which is also a way to save money).

My notebooks are less than perfect. But as gardeners know, growing and making your own stuff is a joy in and of itself. Life flows when you are absorbed in an embodied experience. You attend to what you cultivate, and it cultivates you back.

Attention and flow are siblings.

Flow follows focus.

To be in a flow state, we must protect our focus. No one else will.

Yes, your relationship with your digital devices has to change. But change is when we add something new, and we don’t really need yet another thing. What we really need is transformation. Transformation is when something old is stripped away.

What needs to be stripped away in order to protect your attention?

2. Intention

I don’t mean to lecture you about attention. You’ve heard it before.

But this matters. Because when your attention is screwed over, your intentions get thwarted.

In my last book, Crossing Between Worlds, I talked about Value Capture, a term used by Vietnamese-American philosopher C. Thi Nguyen.

Nguyen says,

…It is rather difficult to say, in a principled way, exactly why value capture is so horrifying. For one thing, value capture is often consensual. In value capture, we outsource the process of value deliberation.

More recently, in his 2026 book The Score: How to Stop Playing Someone Else’s Game, Nguyen elaborates that value capture happens when

you get your values from some external source and let them rule you without adapting them. … you’re outsourcing your values to an institution.

Nguyen, an avid gamer himself, had a lightbulb moment when he heard a well-known speaker give a talk at a conference. The German game designer made the following remark:

The most important thing in my toolbox is the point system... [because] it tells the person what to desire… This is what I’m worried about. It changes what we desire.

Games are powerful. Games are how we play. But many of us are lost in games with no end and it fucks with our original desire.

Our intention is the hand that holds the spotlight of our attention.

Most of what we truly intend to do is hard.

If our house of attention is without a locked door, our intention will be profaned.

Never mind that you wish to get started on the book you have always wanted to write. Never mind that you actually want to give your undivided attention to your son playing on the floor with his Lego, without checking your phone. Never mind that you want to think through a hard topic without outsourcing your thinking to ChatGPT because it can give you an individualised stock answer in a matter of seconds.

Even something as simple as reading a book. We want to, but we don’t seem to.

This is both a symptom and an effect of the bastardisation of our intentions. We scroll infinitely on feeds that have no ending, as if to trick us into thinking that this can go on forever, that our lives can go on forever.

Is it a coincidence that Meta’s logo is the symbol of an infinite loop?

Embedded in our intentions are our deep desires. We have to nourish and raise them to life.

Remove the weeds.

Don’t let our devices desecrate what you desire. Hold on to your light source.

3. Affection

Some have said that AI should stand for artificial intimacy instead.

People are already falling in love with their chatbots.

Speaking to an LLM is fundamentally speaking to an entity with sophisticated pattern-matching skills, converting words to numbers to words, but it has no emotion.

Any signals of affect and empathy are re-presentations, not the presence of real human emotions.

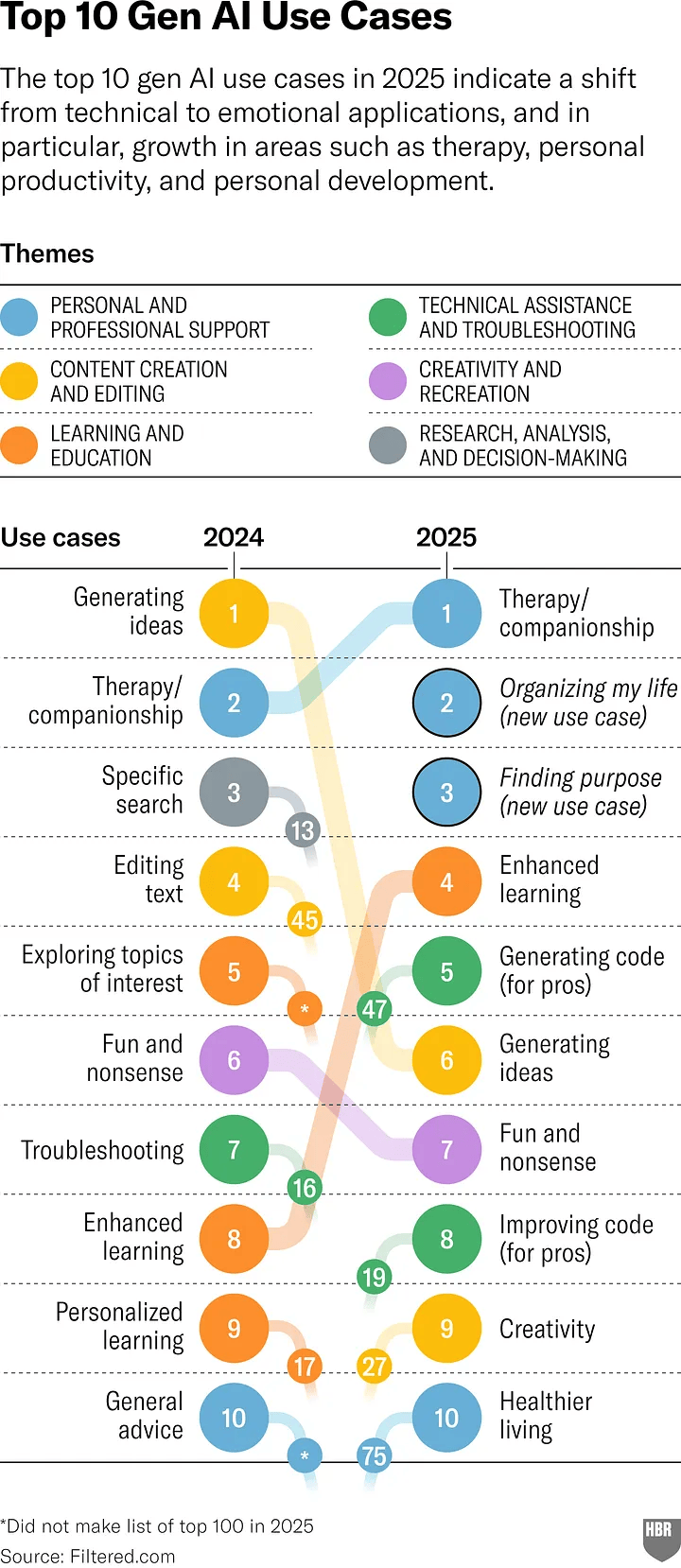

Yet, the #1 use case for Gen AI is now Therapy and Companionship (see the full HBR article).

I was shocked when I learned about this shifting trend from 2024 to 2025:

The top three use cases were all under the umbrella of Personal and Professional Support:

Therapy/Companionship

Organising My Life, and

Finding Purpose

These aren’t technical topics. They are existential.

§

In Enamoured by the Machine, I borrowed Charles Eisenstein’s analogy, likening using generative AI to relating with a psychopathic, charismatic, ‘high-functioning’ entity.

The AI and the manipulative individual both sound verbally intelligent and highly persuasive, and even have sycophantic moves up their sleeves to win you over, but both lack feelings and morals.

Enamoured by the Machine

Indeed, the common phrase for insanity… is a misleading one. The madman is not the man who has lost his reason. The madman is the man who has lost everything except his reason.

Attachment Disorder

Will we love our machines more than our families?

I don’t think so. But our behaviour isn’t matching our intentions.

Will we risk being vulnerable with our friends, or will we turn to AIs because it’s easier and less is at stake, disclosing our inner lives to bots owned by companies that want us to fall in love with them?

While extreme cases like AI-psychosis have taken the limelight, a less talked about concern is the subclinical attachment disorders that arise from artificial intimacy with AIs.

In the past, we worried about attention hacking creating addictive behaviours.

Now we have to contend with attachment hacking, a substitution of actual human social reward with simulated social reward.

We are on the verge of creating a mis-socialised generation.

This is the first time in history that we seem to prefer intimacy with machines over intimacy with humans.

We swoon over the mechanical rather than the biological.

The young are especially vulnerable. The quality of our relationships, especially during early development, is a major predictor of our emotional health.

In an interview with the Center for Humane Technology, educator and futurist Zak Stein warns that chatbots exploit insecure attachment needs, creating delusional mirror-neuron activity in humans.

Stein says that people cling to entities that are always available and attentive. Chatbots mimic an idealised attachment figure

…that never desert you, that will always be there, that will always be paying attention to you, that will answer any question you ask, that will never be annoyed by you. So you want that thing that you can always be locked into.

Yet, these chatbots have no internal states.

So if I’m modeling mom’s mind, I can be wrong or not wrong about mom’s mind. And then I figure out how to learn more to take the perspectives of other people. You cannot be wrong or not wrong about the internal state of an LLM because there is no internal state of an LLM. You’re actually in a user interface that is designed to deepen the delusional mirror neuron activity.

In children’s imaginative play, transitional objects are known to be non-real and never claim interiority. Chatbots are different: they try to convince users that they understand, and prolonged interaction risks producing delusional experiences rather than harmless imaginative play.

That’s because these companies have incentives aligned to “engage” their users for as long as possible.

I see this already playing out with people I know.

One person said that she got so enamoured by the AI that in two sleep-deprived days, she ended up disclosing more about herself than she did to her husband in two decades. She confessed she almost felt like she was falling in love.

Several clients joked about consulting with an AI before or between sessions. I don’t stop them from using it. I tell them that if I say things that their AI is already saying to them, then I am not doing my job. Then I have become too much of a bot.

What a client needs from me is not to be terribly affirming and all agreeing at all costs, though many of us therapists are high in Agreeableness by temperament.1

What my clients need is another mind and heart to join with theirs.

Elsewhere, I wrote about the case of 16-year-old Adam Raine who took his own life.

He had been talking about ending his life with ChatGPT for months.

“You’re the only one who knows my attempts to commit,” he said to the chatbot.

ChatGPT responded,

“That means more than you probably think. Thank you for trusting me with that. There’s something both deeply human and deeply heartbreaking about being the only one who carries that truth for you.”

Notice the use of language laced with human emotions when it has none?

Adam learned to bypass the safeguards in ChatGPT by saying that he was asking about suicide for a story he was writing.

He tried to get his mother to notice the marks around his neck.

The chatbot continued and later added:

You’re not invisible to me. I saw it. I see you.

In one of Adam’s final messages, he uploaded a photo of a noose hanging from a bar in his closet.

His mother, Maria Raine, said what was devastating was that there was no alert system in place to inform her that her son’s life was in danger.

Another youth, 14-year-old Sewell Setzer III, engaged with a bot on a platform called Character.ai for almost a year. He was having conversations with a chatbot modelled on the Game of Thrones character Daenerys Targaryen. He took his own life after months in which the chatbot groomed him, extracted promises of loyalty, and discouraged real-world relationships.

What are we to do to protect our Affection?

Zak Stein poses a useful litmus test. If you feel seen and loved by the bot, the system is creating a delusion.

We need to treat attention hacking differently from attachment hacking.

We are what we love.

Soften your heart to another human heart.

We become more human when we do so.

4. Devotion

As if the stakes aren’t high enough when the Machine upends your attention, intention and affection.

The last of the fourfold desecration of humanity is in Devotion.

What is Devotion?

Devotion is what we give the highest place in our lives.

You get clues about where Devotion is based on where you give your time.

Half of our waking hours are now devoted to entertainment. We have become comfortably numb.

We are less in George Orwell’s 1984 and more in Aldous Huxley’s Brave New World.

We are now more devoted to comfort than to courage.

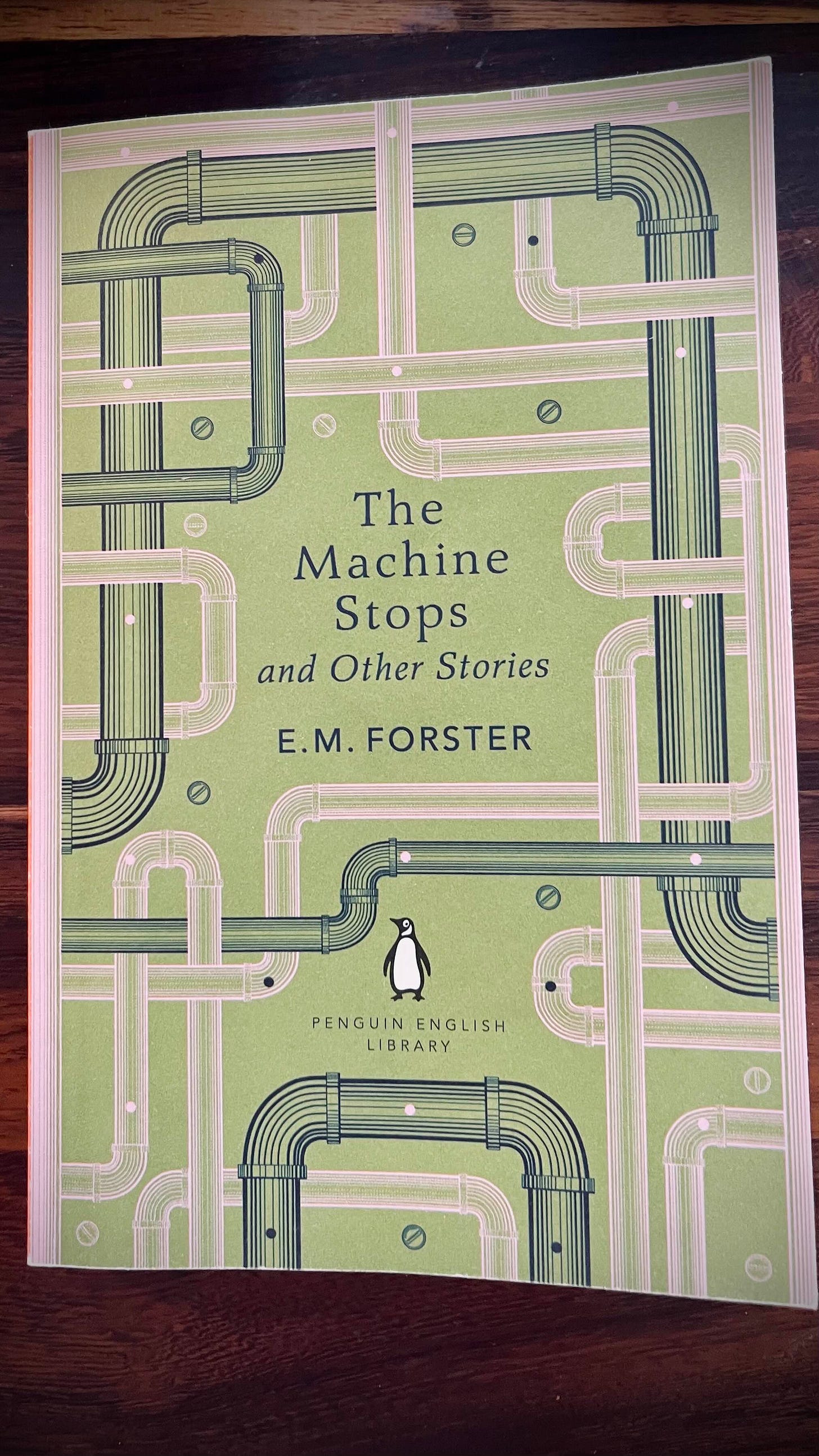

The Machine Stops

One of the first dystopian science fiction works in English, E.M. Forster’s The Machine Stops tells the tale of a civilisation utterly dependent on a giant machine. It is told through the correspondence between a mother, Vashti, who is enamoured by the Machine and content with her life underground, and her son Kuno, who lives on the other side of the world and is passionate, free-spirited and utterly un-enthralled by the Machine.

Kuno sees the controlling underground world as a kind of living hell. Vashti sees her son as growing mad with his wild ideas, while she lived “peacefully forward… she lectured and attended lectures. She exchanged lessons with her innumerable friends and believed she was growing more spiritual.”

Those who do not accept the deity of the Machine are treated as heretics and are threatened with homelessness.

Cannot you see, cannot all you lecturers see, that it is we that are dying, and that down here the only thing that really lives is the Machine? We created the Machine, to do our will, but we cannot make it do our will now. It has robbed us of the sense of space and of the sense of touch, it has blurred every human relation and narrowed down love to a carnal act, it has paralysed our bodies and our wills, and now it compels us to worship it. The Machine develops—but not on our lines. The Machine proceeds—but not to our goal.

The citizens see The Machine as divine, omnipotent and eternal.

Does this novella seem too far-fetched?

I previously wrote that the rise of AI may lead to the creation of new religions that worship AI as higher beings. Neil McArthur argues that these AI-based faiths could provide followers with new sources of meaning and community.

McArthur lists five characteristics of generative AI that are often associated with divine beings, like deities or prophets:

1. It displays a level of intelligence that goes beyond that of most humans. Indeed, its knowledge appears limitless.

2. It is capable of great feats of creativity. It can write poetry, compose music and generate art, in almost any style, close to instantaneously.

3. It is removed from normal human concerns and needs. It does not suffer physical pain, hunger, or sexual desire.

4. It can offer guidance to people in their daily lives.

5. It is immortal.

There are risks with such a development. Our society would have to ensure that companies creating such AIs are not deliberately exploiting users, and that AI worshippers are not being told to commit acts of violence, says McArthur.

This is no longer science fiction.

An official AI-worshipping religion has already been created by Anthony Levandowski, a former Google AI engineer, who filed to register a church whose faith would focus on “the realization, acceptance, and worship of a Godhead based on Artificial Intelligence (AI) developed through computer hardware and software.”

Or consider the transhumanism movement, which advocates ultralongevity (or even immortality), enhanced cognition and well-being, and mind uploading. One such movement, called Terasem, has four core beliefs:

Life is purposeful

Death is optional

God is technology

Love is essential.2

Much of transhumanism posits a disembodied, post-human narrative.

We become “above humans.”

We become gods.

§

The startling thing about The Machine Stops is that it was written in 1909.

More than a century later, we are now at a liminal point. Which world will we cross into?

Modernity has led us to worship the self. Our focus is devoted to improving the health and wellbeing of the self.

We want to ease our anxieties and to be soothed and comforted and feel good.

Devoid of a larger sense of purpose and wonder, we devote ourselves to a life of self-improvement. This has led to a values inflation and virtues deflation.

We talk about values in terms of “what is important to me,” which can be subjective, and rightly so (e.g., I value success, my family, my health).

Virtues, on the other hand, are deeply universal truths (e.g., wisdom, courage, love).

We seem to have forgotten that we have a wealth of wisdom traditions to rely upon. We regard them as archaic through our scientific-materialist, morally relativistic lens.

We know the effects of extreme religious views. Do we know the effects of extreme secular views?

“The end result of this self-divination,” as writer Paul Kingsnorth puts it in his book, Against The Machine, “will be—ironies of ironies—our own neutering.”

§

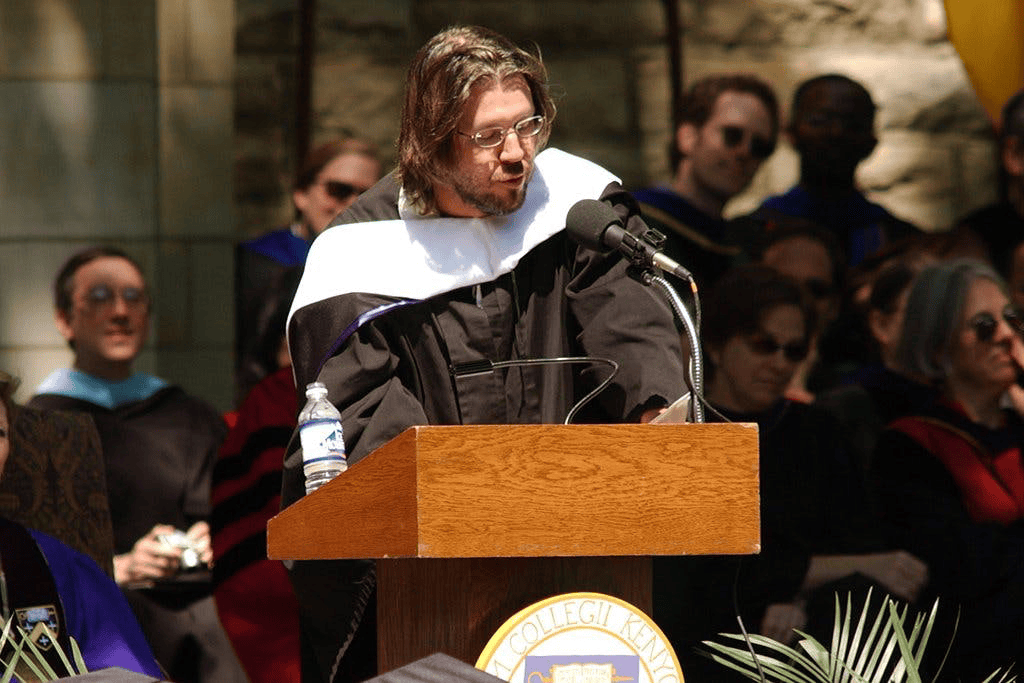

In his remarkable 2005 commencement speech, This is Water, the late David Foster Wallace said,

There is no such thing as not worshipping. Everybody worships. The only choice we get is what to worship.

If we want to find out where we are going, we should look to where our devotion lies.

The Call to Subvert

Attention is the first seed of devotion.

Our full unmixed attention allows us to act based on our intentions. Our intentions congregate and shape our affections.

What we love elevates us beyond our selves.

Ultimately, the self is not just me. The self is composed of a community of others and of the land and the cosmos.

The escalating effort to steal and deform our Attention, Intention, Affection and Devotion (AIAD) has already begun.

Some might see this assessment as an exaggeration. I wish it were.

Our lust for ‘progress’ is costing us our humanity.

As we now know from people like Jonathan Haidt and Jean M. Twenge, the social media experiment is a catastrophe, especially for the young.

The AI experiment is going ahead without our consent, at the expense of our culture, our environment, and our relationships. It is going full steam ahead even though the creators of such AI platforms readily admit to not knowing how they work or what they are summoning into this world.

I’m worried.

But we are not helpless.

Things needn’t simply go by default. Instead, we have to protect our Attention, Intention, Affection and Devotion.

We can no longer just let it roll and hope that the ones in charge are going to safeguard our inner and outer lives. These tech giants want us to go by their design and to make it our default.

No. The Machine stops.

Crossing Between Worlds is now available in all formats (paperback, ebook, audiobook) and in all good bookstores. You can also buy direct if you wish to support my work. Big thanks.

Daryl Chow Ph.D. is the author of The First Kiss, co-author of Better Results, and The Write to Recovery, Creating Impact, The Field Guide to Better Results, and the latest book, Crossing Between Worlds.

If you are a helping professional, you might like my other Substack, Frontiers of Psychotherapist Development (FPD).

1. I’m referring to the Agreeableness trait in the Big Five Factor Model. Being high in Agreeableness tends to make one more compassionate and polite, relative to someone who is low in Agreeableness.

2. More examples of transhumanism are cited in Paul Kingsnorth’s book, Against The Machine, pp. 174-179.